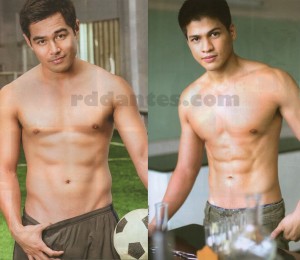

Benjamin Alves [left] and Vin Abrenica were cut from the top 10 list. Even so, they somehow managed to get full-page features in the supplementary pages of this month’s issue of Cosmopolitan [Phils] magazine. Now, if only they’d be daring at the live Cosmo Bash this September!

Comments 42

That’s okay. The concept is so lame anyway. Nothing new, we see them half-naked that way on tv already. So why waste money for an issue that does not provide something else?

Agree

I love you Ben… very nice eyes!

first to comment!

epic fail,, ,, ,,

Yummies!

Oh my God Alden! Fuck me pleeease! :'(

Uhm… where's Alden here?

Looks like there's something inside Benjamin's shorts.

True sis!!

looks like there's a jackstone in there. or a Modess?

Benjamin turns me on so much!

Lower BENJAMIN, lowerrrrrr

aj will always top vin, no matter what. same with rayver as to rodjun.

Yeah, it's getting tiresome…

Yummy boys!

Ben is more deserving of a spot on the list than Rayver and Sam

Maybe Pioling really does lust after his nephew hahahahahha

Thanks RD I love these boys!

Cute and sexy boys! I want Ben!

They should have at least been made to wear bikini briefs hehehe

or just had them strip. doesn't cosmo have anything new?

This Benjamin looks mischievous. Very naughty look.

I don't know about you guys, but Vin really turns me on!

They should have been in the top 10 in place of Rayver and Sam

Wow, Benjamin looks delicious here, there's even a bulge hahahaha

Yeah, and that's with Benjamin wearing loose shorts. You can still clearly see that chewy-looking bulge inside.

is that a bulge? looks like there's a Kotex in there.

This Benjamin's groin looks like it smells so good! My type!!!

Vin Abrenica is growing on me too. He's sexy, huh.

these boys should have been in the top 10 list

Vin will reportedly wear Speedos at the show on the 24th! Go!!!

I love Ben. Fresh yet naughty looks

Something's bulging there, Benjamin!!!

Isn't there a saying that a big nose means a big penis? Vin ABRENICA!!!

Cute chinito! Major like!!

ben is hot =)

these two are more deserving than some of the others in the centerfolds! what are cosmo's selection criteria anyway, hello.

well deserved cut..its hunks right ..not hunkettes? benjamin alves? hahaha

I like Vin, too. He’s sexy in Misibis Bay. Ben should have been included in Cosmo mag top 10 instead of Sam Concepcion.

Benjamin is just irresistible!!!